H200 Bring-up and Naming: How We Stopped Confusing Our Own Receipts

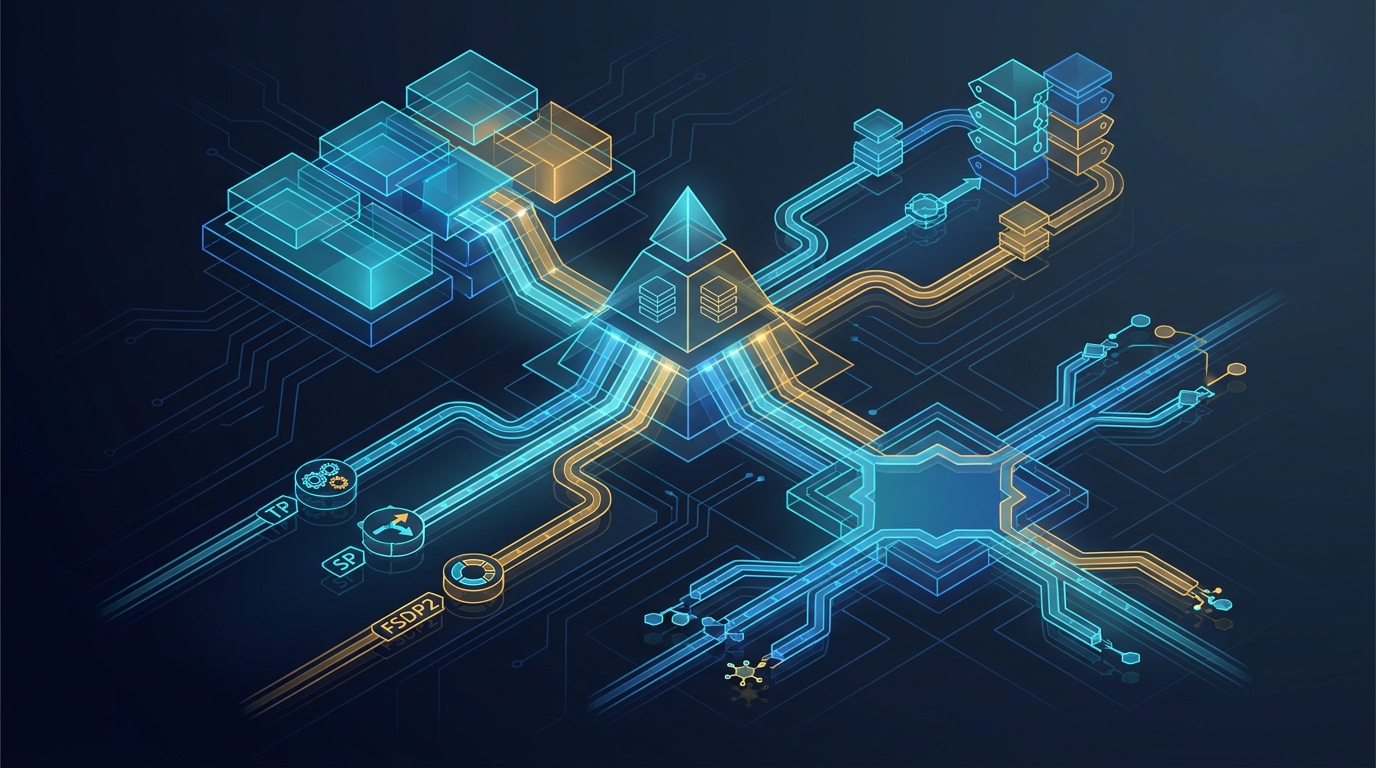

The MegaCpp H200 software stack, the bring-up of TP+SP+EP+FSDP2 on a fresh us-west1-c host, and the naming glossary that prevents two engineers from quoting different runs as the same number.

There are two failure modes you keep hitting when you move a serious training stack from H100 to H200. The first is the obvious one: a CUDA toolkit, a torch nightly, a flash-attn build, and a fused MoE dispatch path that all need to land on the same ABI without any of them silently downgrading the others. The second is unglamorous: the names. The host you ran on three weeks ago, the preset whose label says dense but means "MoE on, sparse off, mHC enabled", the lineage tag in a handoff note, the current lane in a benchmark quartet: none of them carry their meaning on their face, and an experienced team will still misquote them.

This post is the H200 bring-up story for our stack (the actual stack table, the install order that survives, and the moves that took the TP + SP + EP + FSDP2 lane from "compile/hang/forensics" to "step completes, checkpoint saves"), followed by the naming glossary we now treat as a contract, not a wiki page.

The stack we actually run

There is a one-page truth for the H200:8 stack. Everything else is commentary.

| Component | Version | Source |
|---|---|---|
| Python | 3.13.12 | deadsnakes PPA |
| torch | 2.12.0.dev20260405+cu132 | PyTorch nightly cu132 index |
| triton | 3.7.0+git9c288bc5 | bundled with torch nightly |
| CUDA toolkit | 13.2 | apt install cuda-toolkit-13-2 |
| NVIDIA driver | 590.48+ | pre-installed on host image |
| flash-attn | 2.8.3 | pre-built wheel (cu132) |
| flash-attn-4 | 4.0.0b7 | PyPI (pip install --pre flash-attn-4) |
| mamba-ssm | 2.3.1 | pre-built wheel (cu132) |
| causal-conv1d | 1.6.1 | pre-built wheel (cu132) |
| torchao | 0.17.0 | PyPI |
| flash-linear-attention | 0.5.0 | git HEAD with --no-deps |
| fla-core | 0.4.2 | PyPI with --no-deps |
| dualpipe | 1.0.0 | PyPI |
| cutlass-dsl | 4.4.2 | bundled with flash-attn-4 |
| quack-kernels | 0.3.9 | bundled with flash-attn-4 |
| qoptim-cuda | 0.0.0 | pre-built wheel (cu132) |
| cuDNN | 9.20.0.48 | bundled with torch nightly |
| NCCL | 2.29.7 | bundled with torch nightly |
| nsys | 2025.6.3 | bundled with CUDA toolkit |
| gcc | 11.4.0 | system (Ubuntu 22.04) |

The pre-built CUDA wheels are built once on a machine that has the cu132 toolkit installed and republished as artifacts; they have no pip dependency metadata, so installing them does not pull torch back in and does not have a chance to downgrade the nightly.

Disk layout

Everything that grows lives on /mnt/data, with friendly symlinks under $HOME so the operator's shell history keeps working:

    /mnt/data/
      venv/        # Python 3.13 virtualenv
      nanochat/    # repo
      data/        # training data
        parquet/

    ~/venv     -> /mnt/data/venv
    ~/nanochat -> /mnt/data/nanochat
    ~/data     -> /mnt/data/data

The "everything on /mnt/data" rule is not aesthetic. The root disk on a rented GPU host is small, and pip caches plus a single torch nightly will exhaust it during a build. We have lost a host to a full root volume more than once. The symlinks make this invisible to scripts; the layout makes it invisible to disk pressure.
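The layout above can be set up and checked with a few lines of Python. This is a minimal sketch, not our provisioning script: the helper names `link_into_home` and `free_gib` are hypothetical, and the directory names are passed in by the caller.

```python
import shutil
from pathlib import Path


def link_into_home(data_root: Path, home: Path, names: list[str]) -> list[Path]:
    """Create home/<name> -> data_root/<name> symlinks; skip links that are already correct."""
    created = []
    for name in names:
        target = data_root / name
        link = home / name
        target.mkdir(parents=True, exist_ok=True)
        if link.is_symlink() and link.resolve() == target.resolve():
            continue  # already points at the data disk
        if link.exists() or link.is_symlink():
            # Refuse to clobber a real directory or a link pointing elsewhere.
            raise FileExistsError(f"{link} exists and is not a link to {target}")
        link.symlink_to(target, target_is_directory=True)
        created.append(link)
    return created


def free_gib(path: Path) -> float:
    """Free space on the filesystem that holds `path`, in GiB."""
    return shutil.disk_usage(path).free / 2**30
```

Calling `link_into_home(Path("/mnt/data"), Path.home(), ["venv", "nanochat", "data"])` would reproduce the three symlinks, and comparing `free_gib(Path("/"))` with `free_gib(Path("/mnt/data"))` before an install shows which disk is about to take the pressure.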

Install order matters

The single most expensive lesson on H200 bring-up is that pip will silently downgrade torch if you let it. Several packages (most aggressively mamba-ssm, causal-conv1d, and flash-linear-attention) declare torch as a runtime dependency. If you pip install them from PyPI without --no-deps, pip happily replaces your cu132 nightly with whatever stable build it can resolve, and the next training launch crashes in torch._inductor because the ABI no longer matches your fused kernels.

The order that survives is:

- Create the venv on the data disk and update pip/setuptools/wheel.

- Install the cu132 torch nightly first. This sets the ABI baseline.

- Install the four pre-built CUDA wheels (flash_attn, causal_conv1d, mamba_ssm, qoptim_cuda). They have no dependency metadata; they cannot touch torch.

- Install flash-attn-4 from PyPI. It is pure Python and JITs CUDA at runtime.

- Install flash-linear-attention and fla-core with --no-deps. This is the trap.

- Install the rest (torchao, dualpipe, then the data and tooling packages). These are torch-safe.

- Verify torch was not downgraded: python -c "import torch; assert 'cu132' in torch.__version__".

If that assert fails, stop. Do not "just try training" to see if it works. The symptoms of a partial downgrade range from immediate import errors to a subtle numerical drift that only shows up after 1k steps, and you will spend the rest of the day chasing the wrong bug.
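A post-install audit can make the same point mechanically. The sketch below is an illustration rather than part of our installer: it uses the standard-library importlib.metadata to list which installed packages declare torch as a dependency, and repeats the build-tag check from the last step. The function names are hypothetical.

```python
import re
from importlib import metadata

# Matches a requirement on torch itself, not torchvision, torchao or torch-scatter.
_TORCH_REQ = re.compile(r"^\s*torch(?![A-Za-z0-9._-])", re.IGNORECASE)


def declares_torch(requirements):
    """True if any Requires-Dist style entry names torch itself."""
    return any(_TORCH_REQ.match(req) for req in requirements or [])


def risky_packages(names):
    """Installed distributions among `names` whose metadata depends on torch."""
    risky = []
    for name in names:
        try:
            reqs = metadata.requires(name)  # None when no dependencies are declared
        except metadata.PackageNotFoundError:
            continue  # not installed in this environment
        if declares_torch(reqs):
            risky.append(name)
    return risky


def is_expected_build(version, tag="cu132"):
    """Same test as the one-liner in the install steps: the build tag must be in the version."""
    return tag in version
```

Any name returned by `risky_packages(["mamba-ssm", "causal-conv1d", "flash-linear-attention"])` is a package that should only ever be reinstalled with --no-deps. The pre-built wheels described earlier should come back empty because they carry no dependency metadata.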

Bringing up TP + SP + EP + FSDP2

The bring-up that took the most receipts was the four-way composition: tensor parallel, sequence parallel, expert parallel, FSDP2. On a fresh H200 host, with our recipe, this composition initially looked like a torch/Inductor problem. It was not — or rather, it was not only one. The two real bugs were inside our own training script.

The first bug was an EP-active predicate. The launch used --expert_parallel=2 with --expert_tensor_parallel=0 (which means "follow TP"). Our wiring computed "EP is active" from expert_tp_mesh is not None, and expert_tp_mesh stayed None in this configuration. So the model thought EP was off, attached a manual SP hook to inner.mlp, and expanded the router input from a local shard back to the full sequence. The collective looked legal; the math underneath it was wrong, and the hang showed up later as an Inductor assertion. The fix was to pass the real expert_parallel_degree into _apply_cuda_tensor_parallel() and compute "EP active" from degree > 1. After the fix, the trace showed ep_active=True ep_local=True manual_sp=False ep_lane=True, no stale hooks, and a clean router_in shape of (1, 128, 128).
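The difference between the two predicates is small enough to show in isolation. This is a simplified sketch, not the code inside _apply_cuda_tensor_parallel(): the three function names are hypothetical, and the hook rule is reduced to "attach the manual SP hook only when EP is inactive".

```python
from typing import Optional


def ep_active_from_mesh(expert_tp_mesh: Optional[object]) -> bool:
    """Old predicate: infers EP from the presence of an expert-TP mesh."""
    return expert_tp_mesh is not None


def ep_active_from_degree(expert_parallel_degree: int) -> bool:
    """Fixed predicate: EP is active whenever the EP degree exceeds 1."""
    return expert_parallel_degree > 1


def needs_manual_sp_hook(ep_active: bool) -> bool:
    """Simplified wiring rule (assumption): the manual SP hook is for non-EP MLPs only."""
    return not ep_active


# The failing launch: --expert_parallel=2 --expert_tensor_parallel=0 ("follow TP"),
# which leaves the expert-TP mesh unset.
expert_tp_mesh = None
expert_parallel_degree = 2

old = ep_active_from_mesh(expert_tp_mesh)           # False: EP looks off
new = ep_active_from_degree(expert_parallel_degree)  # True: EP is on

print("old predicate attaches manual SP hook:", needs_manual_sp_hook(old))
print("new predicate attaches manual SP hook:", needs_manual_sp_hook(new))
```

Under the old predicate the hook is attached and the router sees the full sequence; under the new one it is not, which matches the manual_sp=False flag in the post-fix trace.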

With that fix, the eager TP + SP + EP + FSDP2 lane reached compute_loss return, optimizer_step_done, and a clean checkpoint save in a single step on a fresh us-west1-c host. Multi-step (num_iterations=5) passed the next day with no hang and no collective failure.

The second bug was the optimizer's notion of effective DP size when PP was added. The pre-existing path computed effective DP as world_size / (tp * ep), which is correct without PP and wrong with PP. With PP turned on, the right value is world_size / (pp * tp * ep), and PP + TP singleton-DP lanes were silently being routed to the wrong distributed Adam path. After threading pp_degree through the optimizer routing, the PP + TP + SP eager lane on depth=4 reached step 2 of 3 with a clean checkpoint save and no traceback.
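The arithmetic is worth writing down, because the wrong formula still returns a plausible number. A minimal sketch using the formulas quoted above (the function names are hypothetical):

```python
def effective_dp_size(world_size: int, tp: int = 1, ep: int = 1, pp: int = 1) -> int:
    """Effective data-parallel size: world_size / (pp * tp * ep)."""
    model_parallel = pp * tp * ep
    if world_size % model_parallel != 0:
        raise ValueError(
            f"world_size={world_size} is not divisible by pp*tp*ep={model_parallel}"
        )
    return world_size // model_parallel


def effective_dp_size_without_pp(world_size: int, tp: int = 1, ep: int = 1) -> int:
    """The pre-fix formula, which ignores pipeline parallelism."""
    return world_size // (tp * ep)


# An 8-GPU PP + TP lane with pp=4, tp=2: DP is a singleton.
print(effective_dp_size(8, tp=2, pp=4))         # 1
print(effective_dp_size_without_pp(8, tp=2))    # 4, which is wrong once PP is on
```

With pp=4 and tp=2 on eight GPUs the old formula reports a DP size of 4 for a lane that has exactly one DP rank, which is how a singleton-DP lane can end up on the wrong optimizer path.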

Compile, then the next compile bug

Eager correctness is half the work. Compile expansion was its own gauntlet. PP + TP + SP + compile on depth=4 passed once we stopped duplicating the PP process group when the pre-PP DP group already covered the right rank membership; reusing the existing dp_process_group as _pp_group removed a long-tail hang in dist.new_group.

The next blocker was a torch pipelining detail. Schedule1F1B's _batch_p2p intentionally degrades homogeneous send/recv batches into raw dist.isend / dist.irecv, which trips the unbatched-P2P warning during eager-init neighbor handshake. The workaround is local: inside the PP schedule.step(...) window, we override _batch_p2p to force dist.batch_isend_irecv(p2p_ops), gated by NANOCHAT_FORCE_BATCHED_PP_INIT_P2P=1. After the override, the clean run shows steady-state steps around 200 ms with no unbatched-P2P warnings in the receipt slice.
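The shape of that workaround is a scoped, env-gated override that is always undone when the window closes. The sketch below shows only that pattern and does not import torch: `scoped_override` is a hypothetical helper, and a SimpleNamespace stands in for the module that owns _batch_p2p.

```python
import os
from contextlib import contextmanager
from types import SimpleNamespace


@contextmanager
def scoped_override(owner, attr, replacement, env_flag):
    """Swap owner.attr for `replacement` inside the block, only when env_flag is "1"."""
    if os.environ.get(env_flag) != "1":
        yield False  # gate closed: leave the original in place
        return
    original = getattr(owner, attr)
    setattr(owner, attr, replacement)
    try:
        yield True
    finally:
        setattr(owner, attr, original)  # restore even if the step raises


# Stand-in for the module that owns _batch_p2p (assumption for the demo).
fake_schedules = SimpleNamespace(_batch_p2p=lambda p2p_ops: "unbatched")

os.environ["NANOCHAT_FORCE_BATCHED_PP_INIT_P2P"] = "1"
with scoped_override(
    fake_schedules,
    "_batch_p2p",
    lambda p2p_ops: "batched",
    "NANOCHAT_FORCE_BATCHED_PP_INIT_P2P",
) as active:
    # In the real lane, schedule.step(...) would run here.
    print(active, fake_schedules._batch_p2p([]))
print(fake_schedules._batch_p2p([]))
```

Restoring in a finally block keeps the override from leaking into later code if the step raises, and the env gate means the default behaviour is untouched unless an operator opts in.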

The last failure class, the error "both a fallback and a decomp for same op: aten.index_add.default", narrowed cleanly through ablations. Dense GPT under TP + SP + FSDP2 + compile passed. MoE combine alone and MoE router-gather-combine both passed under compile. The standalone real TokenChoiceMoELayer was the failing primitive. The fix was twofold: disable the Megatron-permute padded branch on the compiled CUDA path by default, and move EP combine() out of the compile-sensitive region with a selective @torch.compiler.disable. After that, the real base_train TP + SP + EP + FSDP2 + compile lane passes on H200, and the active frontier moved from correctness to scaling and recompile behavior.

Moving from H100 to H200: the gotchas

On paper, H100 → H200 is "more HBM, otherwise the same". In practice, the gotchas that bit us were not silicon; they were the build matrix:

- Torch nightly cu132 wheels for flash-attn, mamba-ssm, and causal-conv1d are not on PyPI. You either build them once and republish as wheels, or you spend 10–15 minutes per package on every fresh box compiling from source.

- A cold inductor cache has different first-compile latency on H200 than on H100 because the kernel set is different, which is why the multi-GPU cold-cache hang we wrote about separately shows up earlier on H200 hosts with rich recipes than on a stripped H100.

- The gcc 11.4 baseline is fine for everything we build, but newer nvcc features will warn if you try to push to gcc 13. Stay on 11.4 unless you have a concrete reason.

The HBM headroom on H200 changes which recipes fit. A configuration that OOMs on 80GB H100 with sequence length 8K may run cleanly on H200 at 16K with room to spare; we now publish recipes with the HBM pressure noted, because "works on our H200 box" is a claim that does not transport to an H100 reader.

The naming glossary

The reason we wrote a glossary at all is that the same English words mean different things in our preset names, our log labels, and our handoff notes.

Hosts. The old March 2026 H200:8 box in europe-west4-a and the current us-west1-c bench and bring-up boxes are different runtime surfaces: same GPU class, different software histories. We use distinct host names per box rather than abstracting them into "the H200". Old reports that say "the H200" almost always mean the historical Europe host; current bring-up logs almost always mean a us-west1-c host. Comparing two tok/sec numbers across these is comparing two systems.

Presets. The most consequential trap is the word dense. Our nam52_h200_dense_ref_v1 preset means dsa=False (sparse attention off), not "no MoE". The MoE stack is on; the receipted March 2026 baseline also carried mHC with mhc_n_streams=4. We now also publish nam52_h200_moe_no_dsa_ref_v1 as an alias of the same preset with the intended meaning spelled out, and nam52_h200_dense_no_mhc_recovery_v1 as the explicit bring-up/bisect helper with mHC deliberately disabled. The pinned NAM52 sparse support-region presets all live on nem_dsa_layers="8,9,10,11" over the 52-layer AEME substrate (4 sparse A-ranks, 9 full-attention A-ranks); the NAM52R production candidate uses pattern AEMEAEMEAEMR with the inverse mix (4 full, 9 sparse). Despite both having a historical dense key in some preset names, they are not all-dense and they are not the same attention substrate. We added nam52r_h200_4full_9sparse_candidate_v1 and nam52r_h200_prod_candidate_v1 as aliases that read correctly without context.
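One way to keep an alias honest is to resolve it to the canonical preset and print what the preset actually switches on. The registry below is an illustrative sketch, not our preset loader: the dictionary layout and the `moe` key are assumptions, while dsa=False and mhc_n_streams=4 come from the description above.

```python
# Canonical presets (layout and the "moe" key are assumptions for this sketch).
PRESETS = {
    "nam52_h200_dense_ref_v1": {"dsa": False, "moe": True, "mhc_n_streams": 4},
}

# Aliases that spell out the intended meaning.
ALIASES = {
    "nam52_h200_moe_no_dsa_ref_v1": "nam52_h200_dense_ref_v1",
}


def resolve(name: str) -> str:
    """Map an alias to its canonical preset name; canonical names pass through."""
    canonical = ALIASES.get(name, name)
    if canonical not in PRESETS:
        raise KeyError(f"unknown preset: {name}")
    return canonical


def describe(name: str) -> str:
    """Say what the preset means without relying on the words in its name."""
    cfg = PRESETS[resolve(name)]
    return (
        f"{resolve(name)}: "
        f"sparse attention {'on' if cfg['dsa'] else 'off'}, "
        f"MoE {'on' if cfg['moe'] else 'off'}, "
        f"mHC streams={cfg['mhc_n_streams']}"
    )


print(describe("nam52_h200_moe_no_dsa_ref_v1"))
```

Printing the resolved description next to a benchmark number removes the need to remember that dense here refers to attention and not to the MLP stack.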

Log labels. The word current means "the baseline recipe used in this wave", not a timeless contract, and it is also unrelated to the --kernel=current loss-kernel option. The word head means "the run was launched from the repo HEAD on that host", which is useful in current-head provenance reports for comparing the live repo against an older receipted lineage on the same runtime surface. The lineage tag v19c is operator shorthand for the March 19, 2026 H200 wave; it is not a preset family or a stable launcher contract, just a tag on a slice of receipts.

The practical rule

Before any two H200 numbers get compared, three things have to be identified explicitly: the host lineage (old Europe canonical or which current us-west1-c host), the preset/runtime family (dense no-DSA baseline, NAM52 pinned support-region sparse, or NAM52R 4 full / 9 sparse), and the launch regime (Megatron, DDP, FSDP2, no-compile, regional-compile, and so on). Anything missing one of those three is a rumor. Anything carrying all three is a comparison.
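The rule is simple enough to enforce in whatever tool collects the numbers. A minimal sketch, assuming a hypothetical Receipt record with one field per required identifier (the field values in the example are placeholders, not real host names or measurements):

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Receipt:
    tok_per_sec: float
    host_lineage: Optional[str] = None   # e.g. old Europe host or a named us-west1-c host
    preset_family: Optional[str] = None  # e.g. dense no-DSA, NAM52 sparse, NAM52R
    launch_regime: Optional[str] = None  # e.g. FSDP2 + regional-compile

    def missing(self) -> list[str]:
        """Names of the required identifiers that were not recorded."""
        required = ("host_lineage", "preset_family", "launch_regime")
        return [field for field in required if not getattr(self, field)]


def classify(receipt: Receipt) -> str:
    """'comparison' when all three identifiers are present, otherwise 'rumor'."""
    return "rumor" if receipt.missing() else "comparison"


full = Receipt(1000.0, "us-west1-c host A", "dense no-DSA baseline", "FSDP2, no-compile")
partial = Receipt(1200.0, host_lineage="the H200")

print(classify(full))                         # comparison
print(classify(partial), partial.missing())   # rumor ['preset_family', 'launch_regime']
```

The check only confirms that the three identifiers were written down. Deciding whether two complete receipts are actually comparable still takes a reader who knows what the values mean.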

References

- H200_STACK_SETUP.md
- H200_NAMING.md
- tp_sp_ep_fsdp_h200_bringup_2026-04-07.md
- MODAL_MULTI_GPU_STATUS.md