Engineering Blog

Technical deep dives from the Datasunrise OÜ team. No marketing fluff—just honest takes on training infrastructure, vector databases, code search, and what it actually takes to build AI for C++ engineers.

SLM Ensemble Architecture: 8 Specialists, One Coherent System

How our ensemble of 8 specialist C++ models (4B-8B params, 0.8B-1.6B active) is organized. Hybrid Mamba 3 + Transformer stack, expert routing, and why this beats a 70B generalist for real C++ work.

Training the SLM Ensemble: Muon, FP16, and 100-200B Tokens per Specialist

The training recipe grounded in our nanochat POC and cppmega stack: Muon optimizer, FP16 training, curriculum, and what survived contact with real GPUs.

The C++ Corpus: Data Curation for 8 Specialists

Corpus curriculum, tokenization, document masking. Why 'just train on GitHub' is not an answer for C++ and what the data pipeline actually looks like.

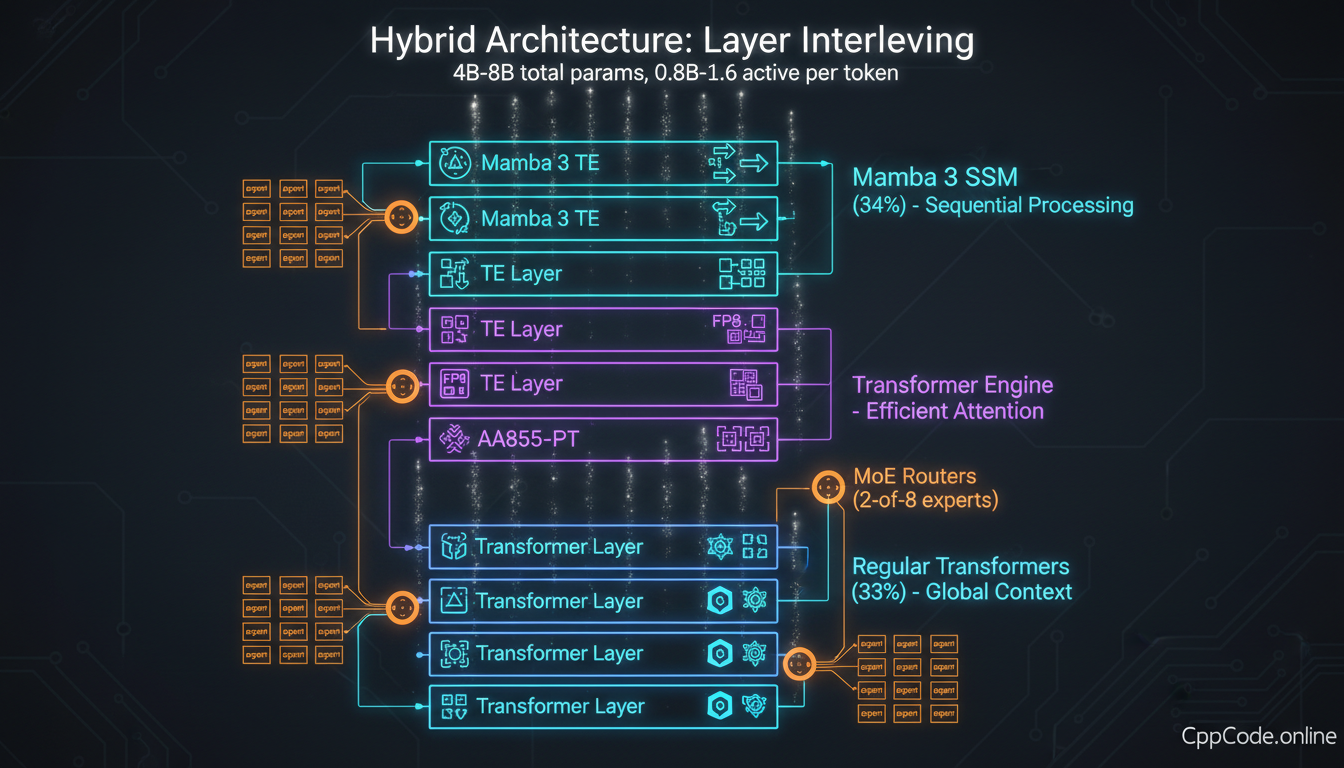

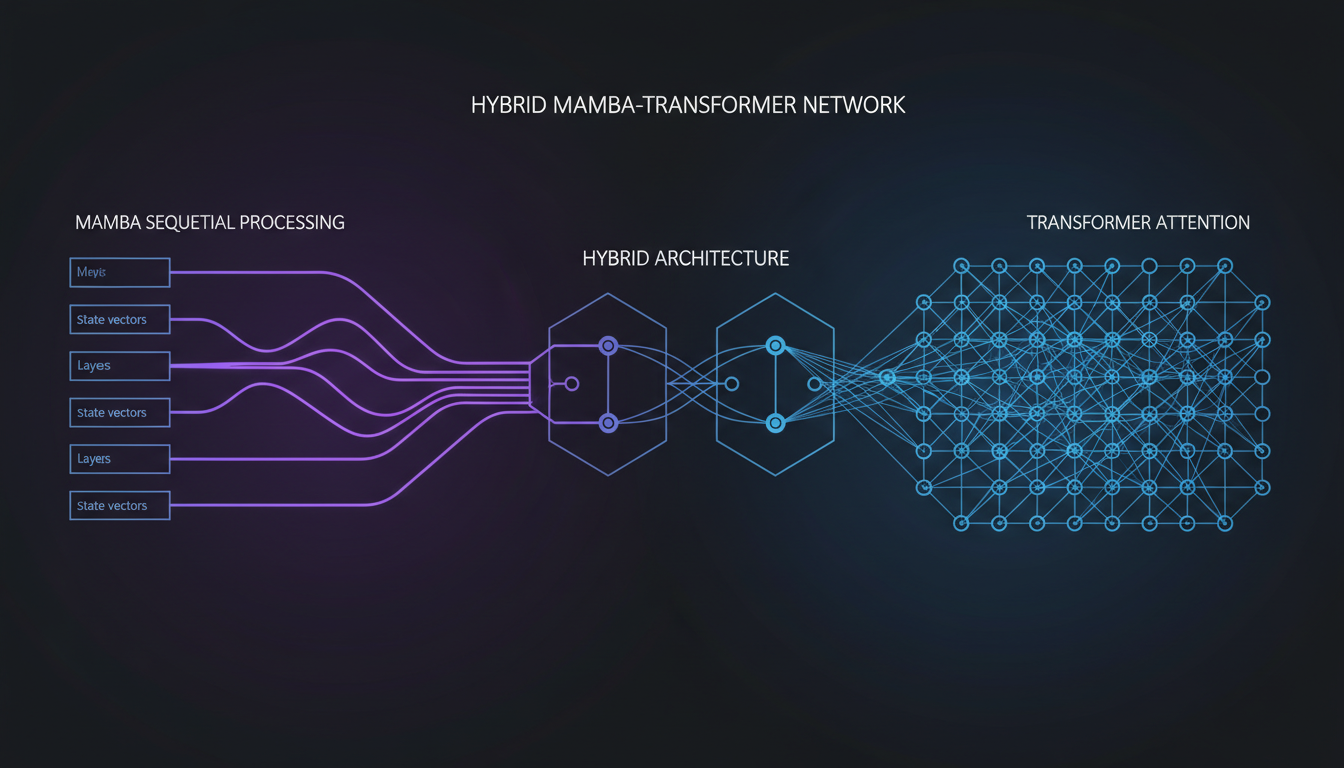

Mamba 3 + Transformers: The Hybrid Stack We Actually Ship

PSIV cache, MIMO, register split, and the layer-interleaving choices behind the MegaCpp models. Why the hybrid beats pure attention on long C++ contexts.

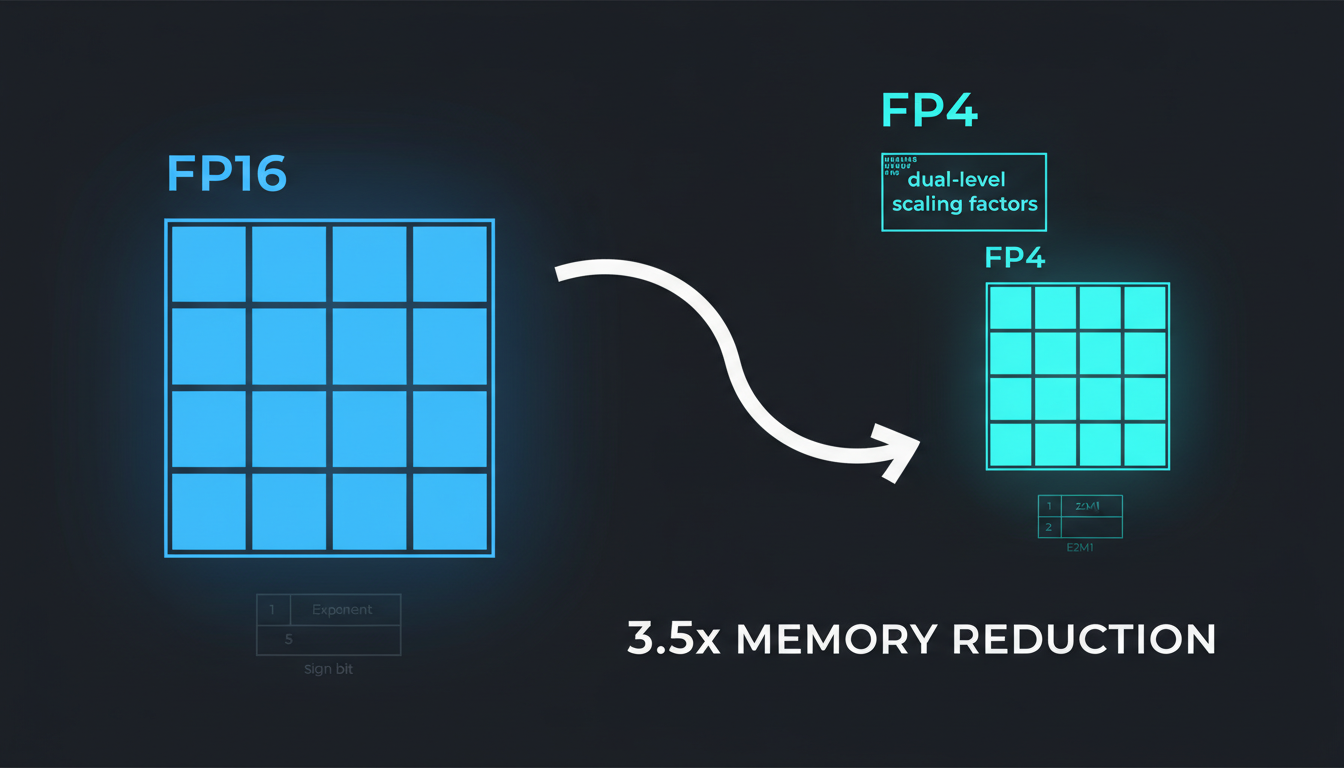

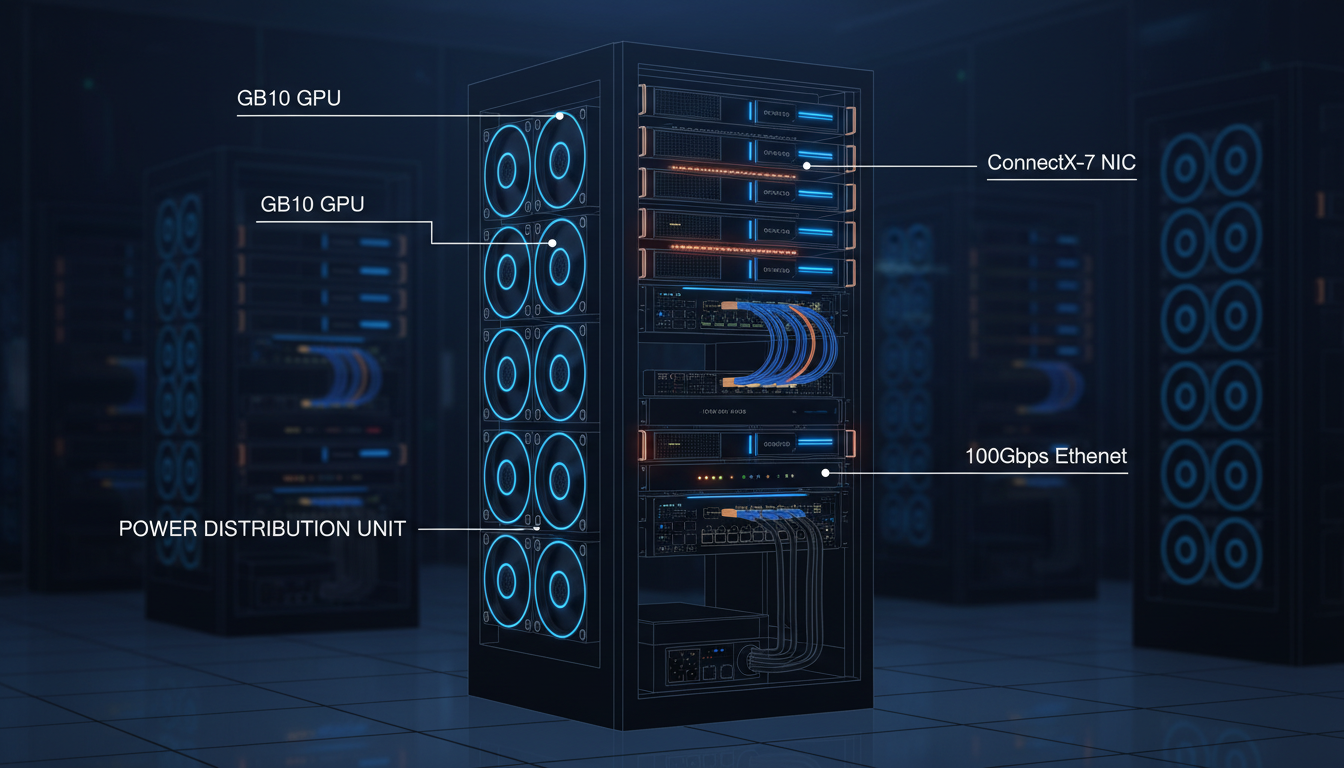

NVFP4 Inference on Blackwell and GB10

FP16/BF16 training maps to NVFP4 at inference. Kernel selection, CUTLASS block-scaled MMA, GB10 vs B200 capability split, and the pins that make it reproducible.

Meet the 8 Specialists

Profiles of the 8 models in the MegaCpp ensemble: algorithms, templates, memory, concurrency, systems, build, debug, STL. What each is good at and what each is not for.

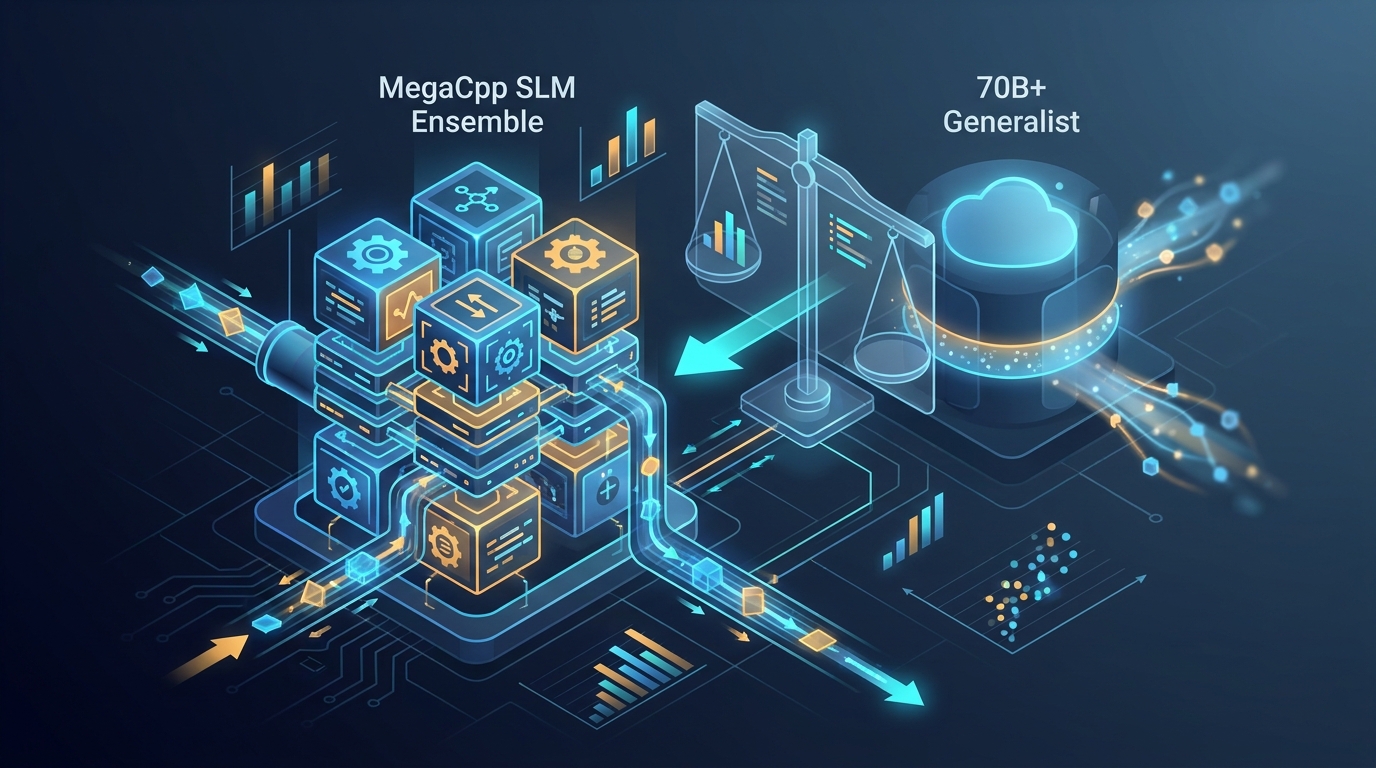

How We Evaluate: Four-Axis Eval Beats Vibes

Compile, context adherence, hallucination, correctness. The harness, the benchmarks, and the cost-per-quality argument for the ensemble vs 70B generalists.

GB10 War Stories: sm_121a, NaNs, and Honest Numbers

What it actually took to get clean training on GB10: ptxas, Liger graph breaks, NVFP4 crashes, a NaN bisect that exonerated the code, and the three pinning rules we would keep.

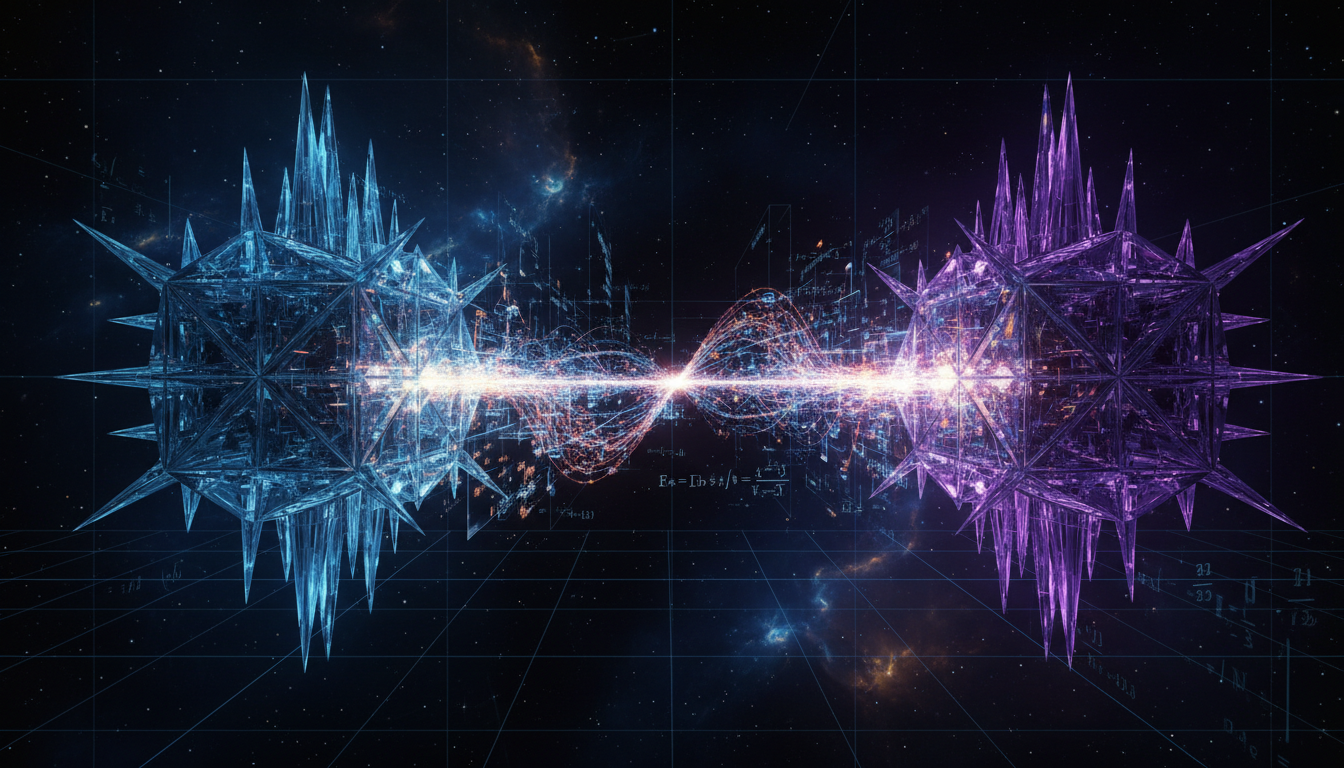

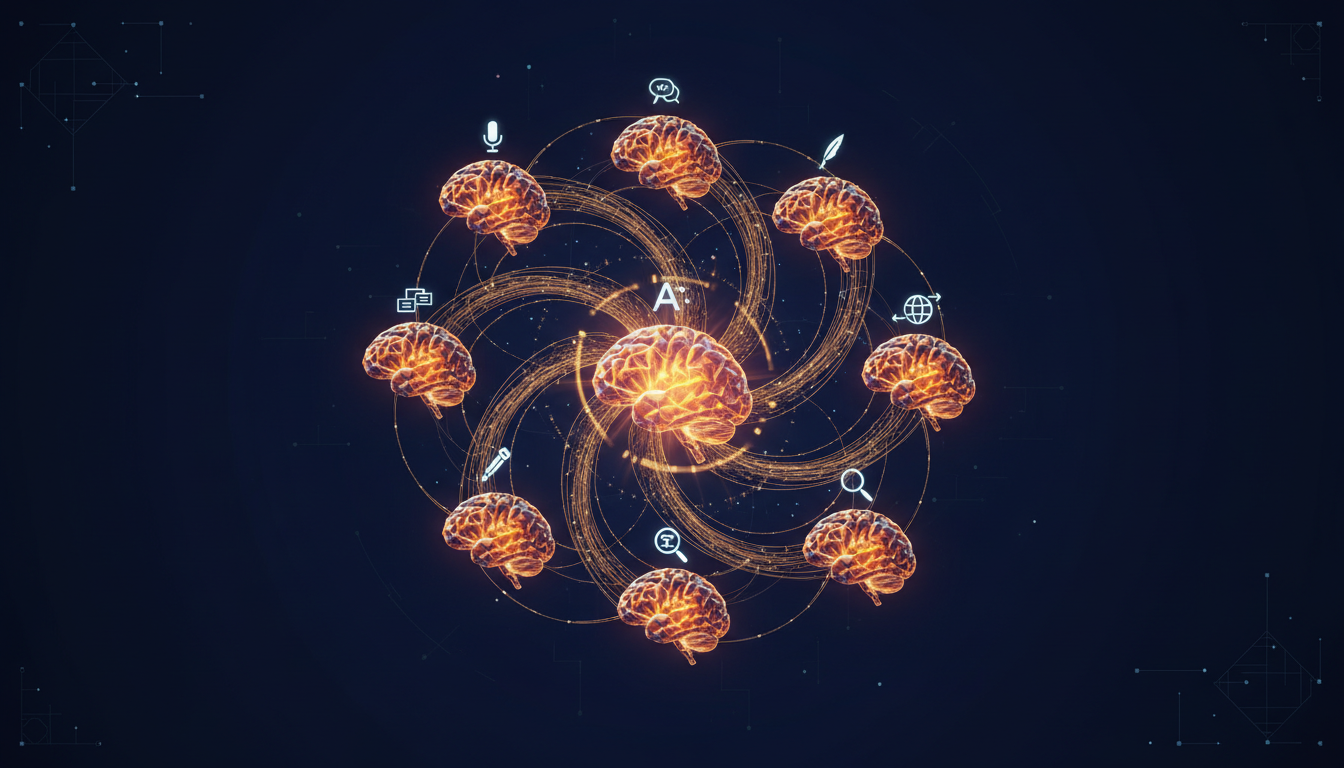

The Latent Bridge: How Our 8 SLMs Talk Without Words

Why we ditched token-based inter-model communication for direct semantic vector channels. Inspired by recent research on cross-model latent bridges, our 8 specialist SLMs now share meaning at latent speed.

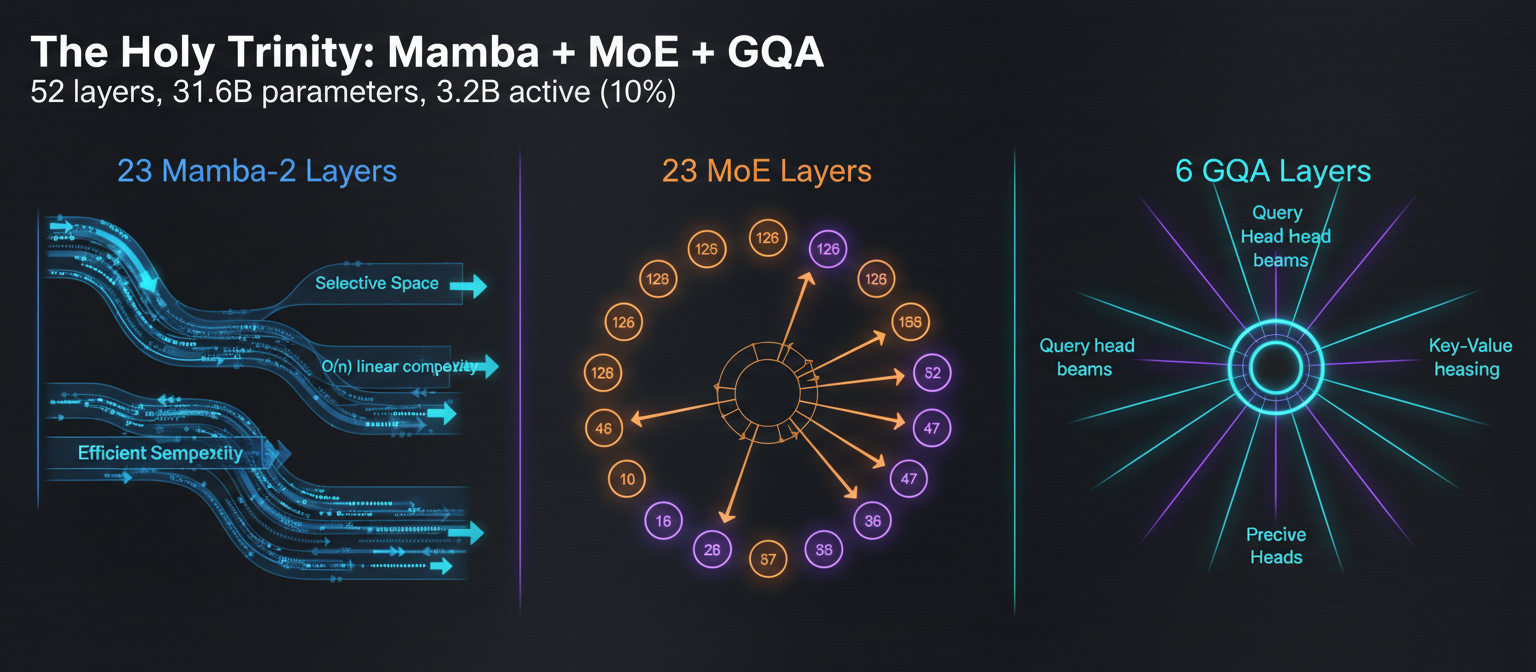

Nemotron Nano 3: The Holy Trinity of Efficiency (Mamba + MoE + GQA)

How NVIDIA combined Mamba-2 state spaces, sparse MoE, and GQA to create a 31.6B model that activates only 3.2B parameters. The architecture that inspired our SLM ensemble.

Implementing Mamba 3 in Production: A Practitioner's Guide

Everything we learned deploying Mamba 3 state-space models for C++ code generation: hardware requirements, Tensor Engine integration, memory efficiency, long-context handling, and real performance benchmarks.

Mamba 3: The State Space Revolution (And Why We Use It)

A first-principles journey through Mamba 3's innovations: trapezoidal discretization, complex dynamics via RoPE, and MIMO formulation. From mathematical intuition to real-world performance.

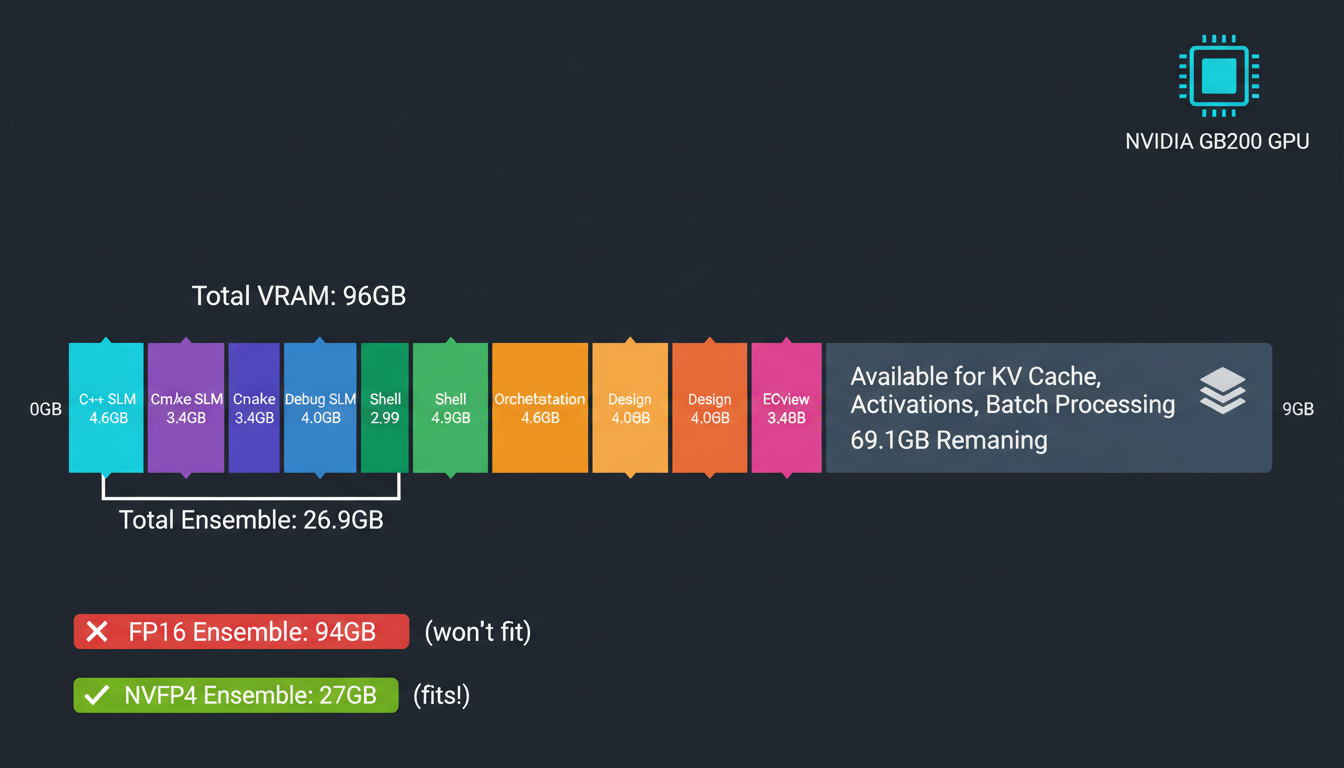

The 4-Bit Miracle: How NVFP4 Squeezes 16-Bit Intelligence into 4-Bit Memory

NVFP4 achieves the impossible: 3.5x memory reduction with less than 1% accuracy loss. Learn how dual-level scaling enables running 7 specialist SLMs on a single GPU.

Training at 4-Bit: The Research That Broke the Rules

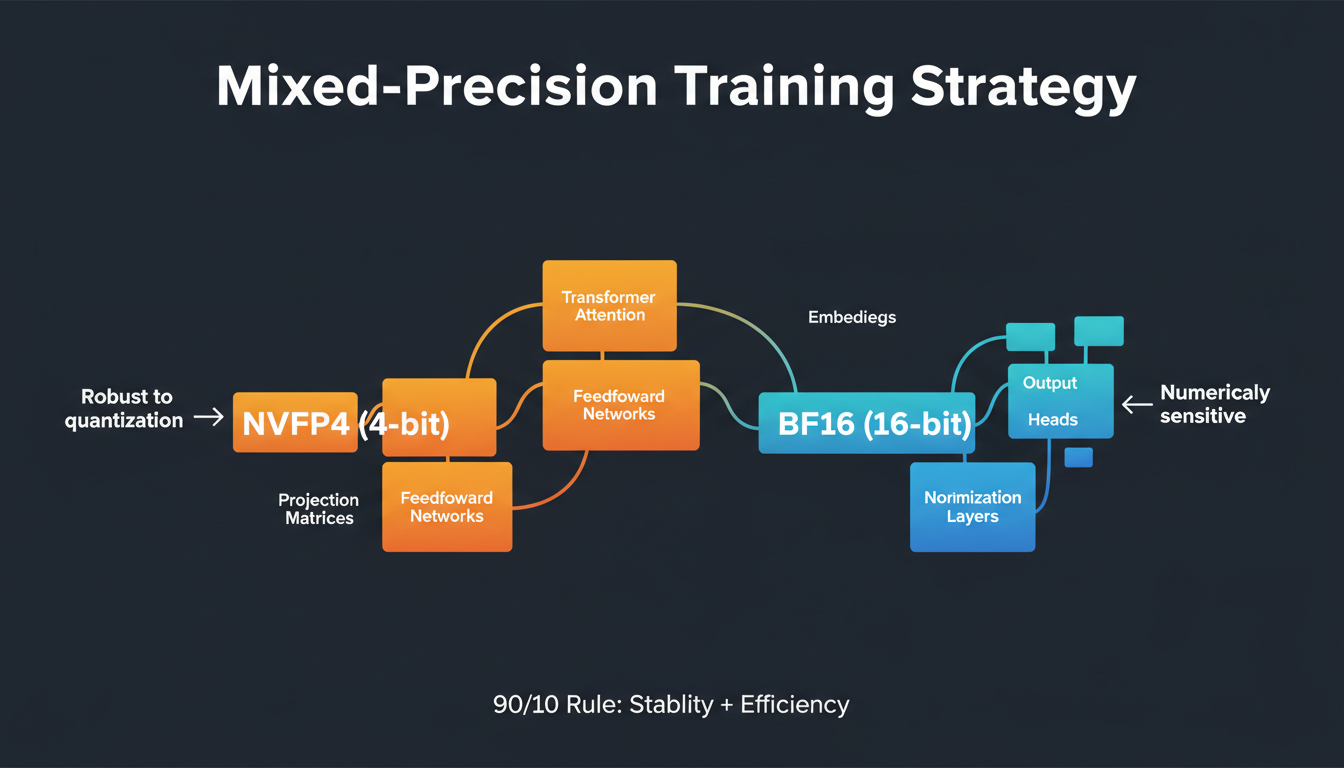

NVIDIA demonstrated the impossible: training a 12B model on 10 trillion tokens at 4-bit precision. How mixed-precision strategies and the Muon optimizer enable FP4 training for our SLM ensemble.

Building Production SLMs with NVFP4: A Practical Guide

Hands-on guide to quantizing and deploying specialized language models with NVFP4. PTQ workflows, QAT strategies, and how we fit 7 models (50B params) in 28GB.
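
The memory figures quoted in the NVFP4 teasers can be sanity-checked with back-of-the-envelope arithmetic. The sketch below assumes the standard NVFP4 layout (4-bit E2M1 values, one FP8 scale per block of 16 values), ignores the per-tensor FP32 scale as negligible, and counts weights only, with no activations or KV cache. The function names are illustrative and not taken from the posts.

```python
FP4_BITS = 4      # E2M1 payload per value
BLOCK_SIZE = 16   # values that share one block scale
SCALE_BITS = 8    # FP8 (E4M3) scale stored once per block


def nvfp4_bits_per_value() -> float:
    """Effective storage per value: payload plus amortized block scale."""
    return FP4_BITS + SCALE_BITS / BLOCK_SIZE


def nvfp4_weight_gb(params: float) -> float:
    """Approximate weight footprint in GB (10^9 bytes), weights only."""
    return params * nvfp4_bits_per_value() / 8 / 1e9


if __name__ == "__main__":
    bits = nvfp4_bits_per_value()
    print(f"bits per value:       {bits}")
    print(f"reduction vs 16-bit:  {16 / bits:.2f}x")
    print(f"50B params footprint: {nvfp4_weight_gb(50e9):.3f} GB")
```

Under these assumptions the effective cost is 4.5 bits per value, which works out to roughly a 3.5x reduction against 16-bit weights and about 28 GB for 50B parameters.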

The Magnificent Eight: Why We Built 8 Tiny Models Instead of 1 Big One

Specialist vs generalist models: why 8 models with 4B-8B parameters each (0.8B-1.6B active) outperform single 70B+ models for C++ engineering. MoE architecture deep dive.

Mamba Meets Transformers: Our Hybrid Architecture That Shouldn't Work But Does

Combining Mamba 3 state-space models with Transformer Engine layers and regular attention. A technical deep dive into a hybrid architecture that defies conventional wisdom.

The Honest Truth About Training AI on GB10: Our Grace Blackwell Journey

Why we use NVIDIA GB10 (DGX Spark) clusters with 128GB unified LPDDR5X memory and 1 PFLOP FP4 performance, plus the economics of desktop AI supercomputers vs. cloud.

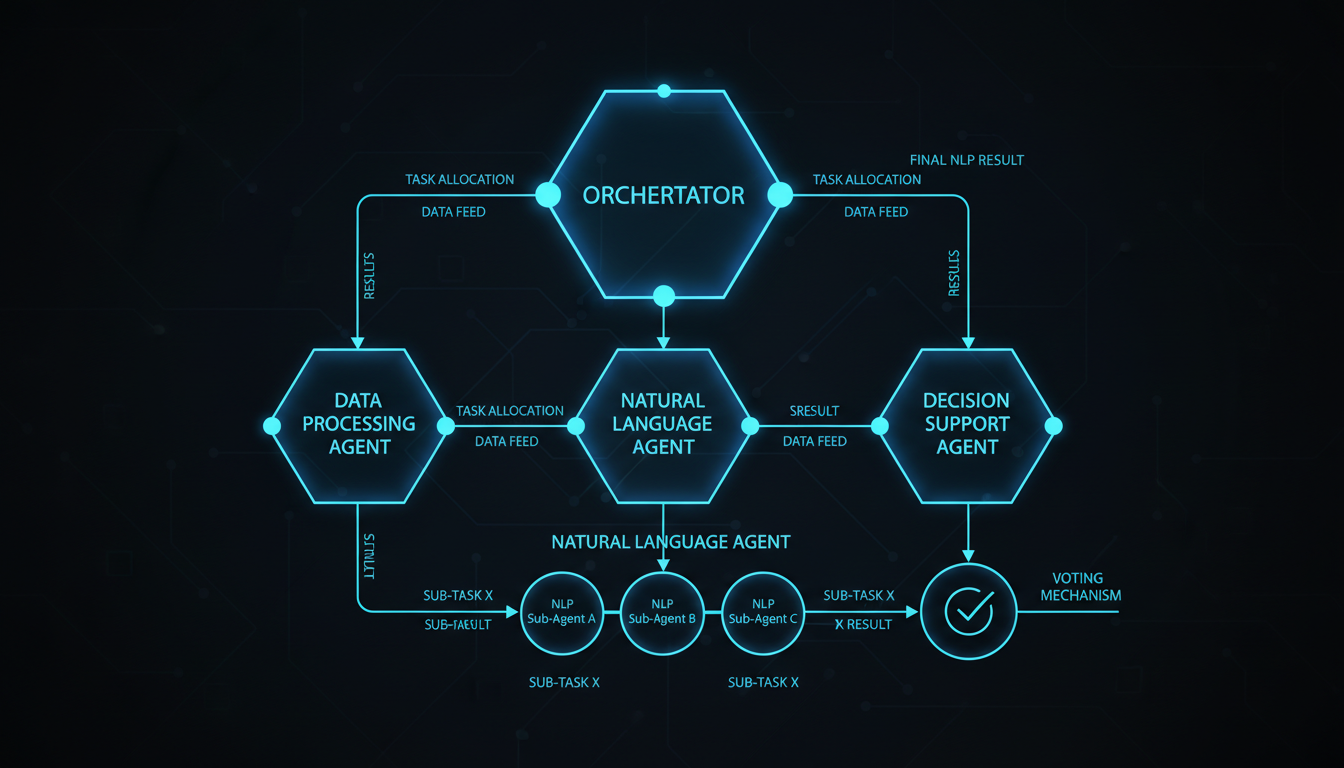

A Million Steps Without Falling: What We Learned About AI Agent Orchestration

An agent with a 0.1% per-step error rate sounds great until step 1000, when you realize you're debugging garbage.
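
A rough way to see why a small per-step error rate compounds over long runs: the sketch below assumes every step fails independently with the same probability. That is a simplification chosen for illustration, not a claim about how the post models agents, and the function name is hypothetical.

```python
def success_probability(per_step_error: float, steps: int) -> float:
    """Probability that all `steps` succeed, assuming independent errors."""
    return (1.0 - per_step_error) ** steps


if __name__ == "__main__":
    for n in (100, 1_000, 10_000):
        p = success_probability(0.001, n)
        print(f"{n:>6} steps: {p:.4f} chance of an error-free run")
```

With a 0.1% per-step error rate, the chance of reaching step 1000 without a single error is only about 37% under this independence assumption.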

Why We Train Small Models That Actually Understand C++

GPT-4 can write hello world in any language. Our models know why your template metaprogramming is broken. Learn how 4B-8B specialist models outperform 70B+ generalists.

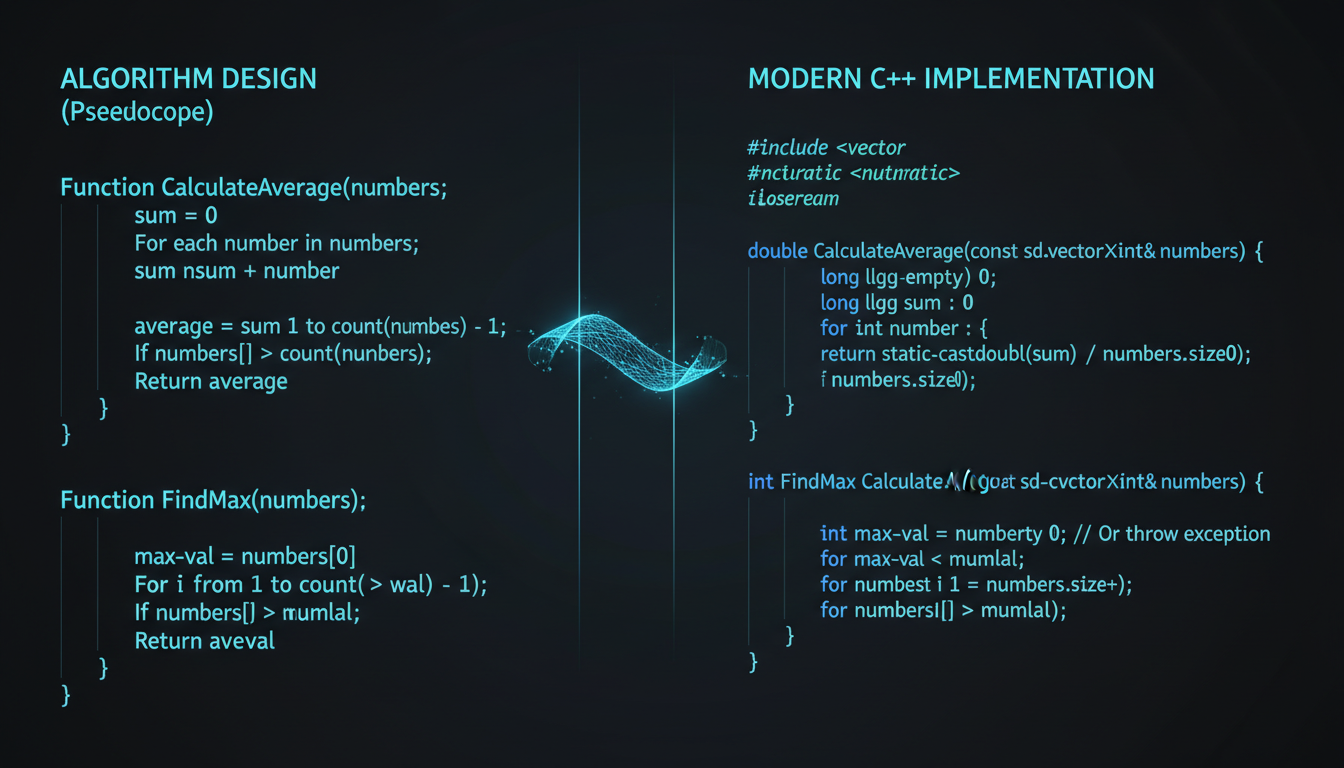

The Algorithm Whisperer: Our Newest SLM Specialist

Meet Algorithm SLM (Algo-7B): our 8th specialist model trained on algorithm design, pseudocode interpretation, and complexity analysis. Why pseudocode understanding matters for C++ development.

Attention Validity and Structure-Aware Attention

A packed-row validity regression, the clustered-sparse follow-up it forced, and the structure-aware attention plan we are integrating into the MegaCpp training stack.

Building the C++ Training Data Pipeline: What Worked, What Broke

An honest walkthrough of how the MegaCpp training data pipeline was built — source selection, filtering, dedup, tokenization, document masking, and the quality gates that catch our own mistakes.

Distributed Optimizer Stress: Drift, All-Gather vs Reduce-Scatter, and Muon Gotchas

Document Masking and the Curriculum: What to Feed Each Specialist First

Why MegaCpp masks documents inside packed sequences, how the four-phase curriculum is shaped from 4K syntax to 64K repository graphs, and what our ablations told us about the right starting diet for each specialist.

Flash Attention 4 in Practice: What We Shipped, What We Cut

Our hybrid stack's applicability matrix for Flash Attention 4, the Build4 validation profiles, the dense-full rollout gates, and the regressions that killed the first FA4 variants before they reached production.

FP8 in the Training Stack: What Shipped, What We Rolled Back

An engineer's account of rolling FP8 through the MegaCpp training stack: DeepGEMM block-scaled GEMMs, torchao Float8Linear, TransformerEngine's FP8-aware activation checkpointing, and the parts that looked good on paper and lost the benchmark.

FSDP2 Pain and Payoff: What Actually Cut Memory on GPU and TPU

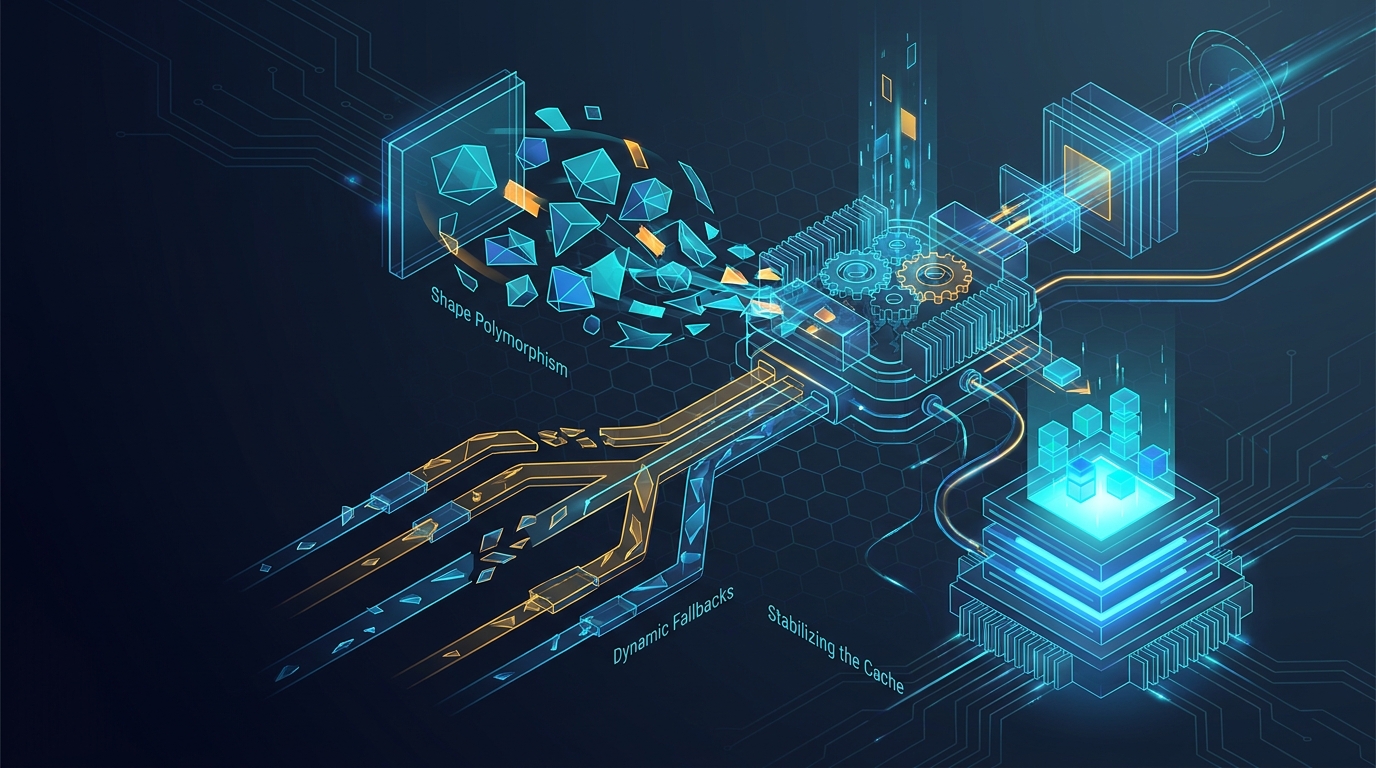

Graph Recompilation Hell on torch_xla: Shape Polymorphism, Dynamic Fallbacks, and Stabilizing the Cache

H200 Bring-up and Naming: How We Stopped Confusing Our Own Receipts

The MegaCpp H200 software stack, the bring-up of TP+SP+EP+FSDP2 on a fresh us-west1-c host, and the naming glossary that prevents two engineers from quoting different runs as the same number.

Landing the Mamba 3 + Transformer Interleave Ratio: What the Ablations Told Us to Throw Away

How the hybrid layer pattern for our C++ specialist converged: AEMEAEDE versus dense versus GDN, what the NAM52 and NAM56R ablations settled, and the features we cut based on the data.

libtpu, JAX and torch_xla in One Container: Device Init Races and Env-Var Landmines

What it looks like when a single TPU host runs JAX and torch_xla side by side: libtpu initialization races, VFIO contention, PJRT platform resolution, and the env-var surface you only learn about when it breaks.

Long Context and Attention Sinks: What Actually Held Up Past 16K

YaRN, RNoPE, packed-document masking, attention sinks, massive activations, and query-dependent output gating: a field report on which long-context techniques survived contact with the MegaCpp C++ corpus.

The Mamba 3 Kernel Journey: CUDA, Pallas, TileLang, and an Honest Look at CuTe DSL

How the Mamba 3 kernel stack actually shipped in our nanochat POC: TileLang on H200, Pallas on TPU v6e, a CuTe DSL port that never made it, and the verdicts that came out of each attempt.

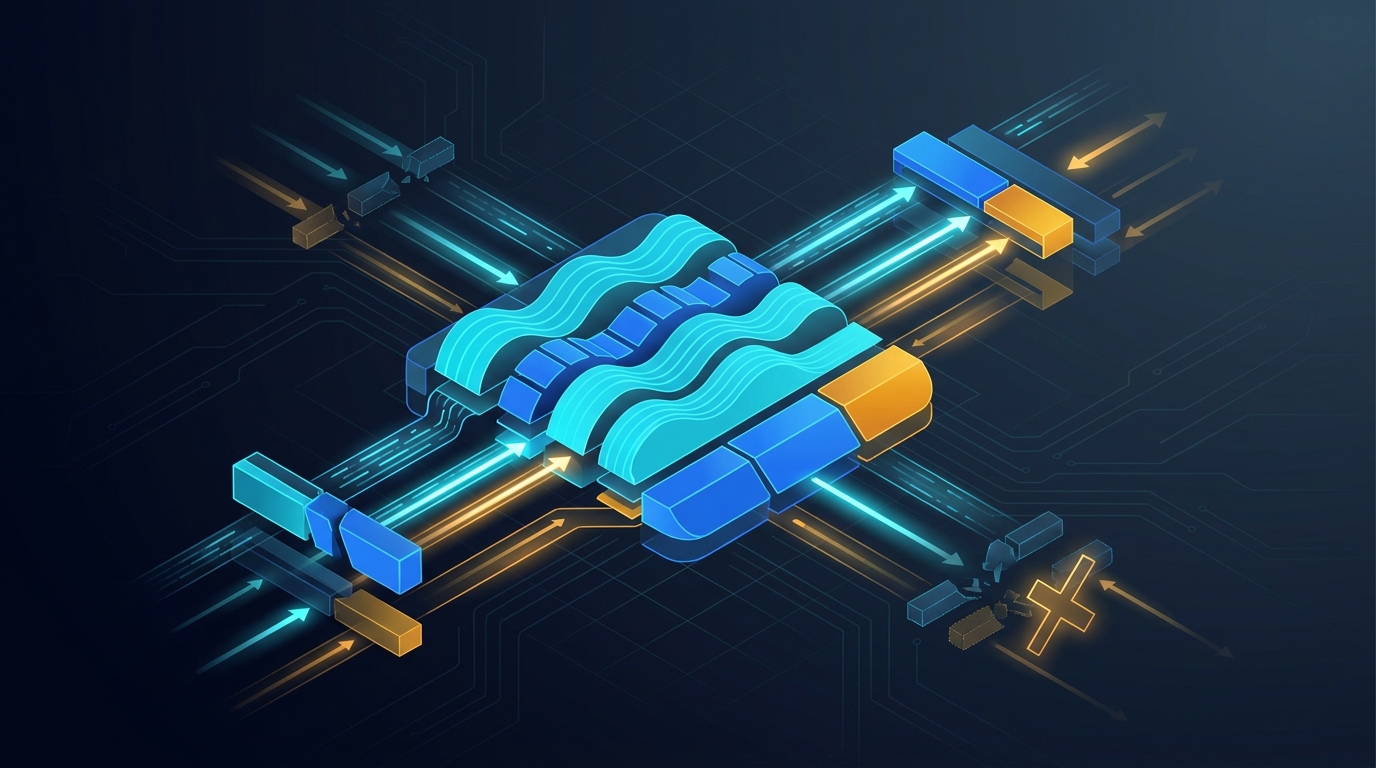

Mamba 3 Parallel Performance: What Beat Attention in the nanochat POC, and Where It Lost

MIMO scaling, block sizes, the PsiV cache trade-off, and an honest tally of where a Mamba 3 hybrid outran pure attention on H200 and where it did not.

MLA Weight Absorption: What We Kept, What We Dropped for the C++ Specialists

Multi-Head Latent Attention in production: why DeepSeek's absorbed decode path is the right choice for KV-cache, why it is the wrong choice for training, and how the C++ specialist ensemble uses both.

The MoE Routing We Actually Shipped

Token Choice vs Expert Choice, null-expert debugging, gating stability, and the production routing decisions behind the MegaCpp SLM ensemble.

Benchmarking the MegaCpp Stack on Modal: Multi-GPU Lessons From Rented Boxes

What we learned running our training stack on rented H100, H200, and B200 boxes through Modal — three benchmark lanes, an 8-GPU FSDP2 hang, and the bookkeeping that lets the numbers survive a week.

OOM Hunting on TPU v6e: HBM Fragmentation, the XLA Allocator, and What Actually Moved Memory

SOTA Ablation and Comparison: How MegaCpp Decides What to Keep

The ablation plan, the comparison methodology, and the honest numbers behind the MegaCpp SLM stack — what stacked, what didn't, and what we threw out even though the paper said it would help.

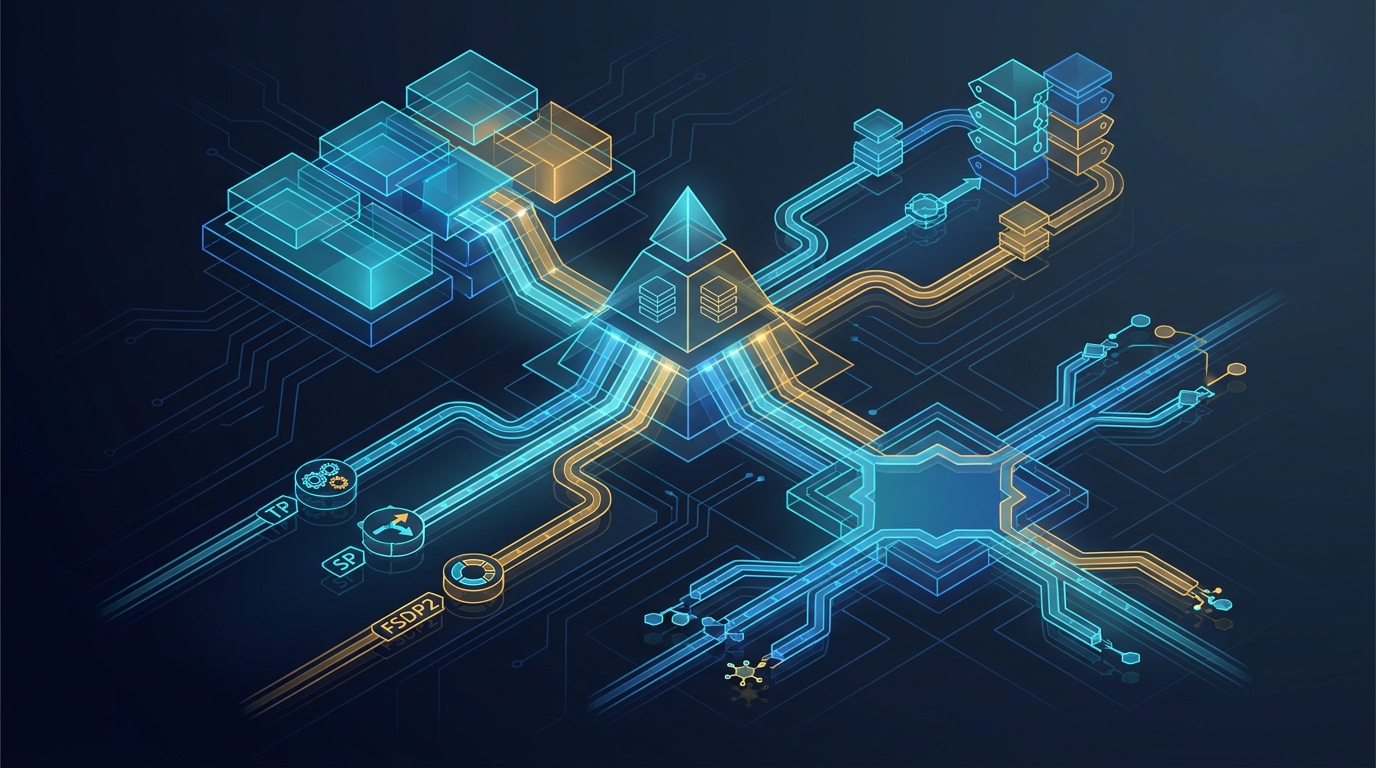

Tensor, Sequence, Expert, FSDP: The Hybrid Sharding Stack and the v6e-8 Bugs

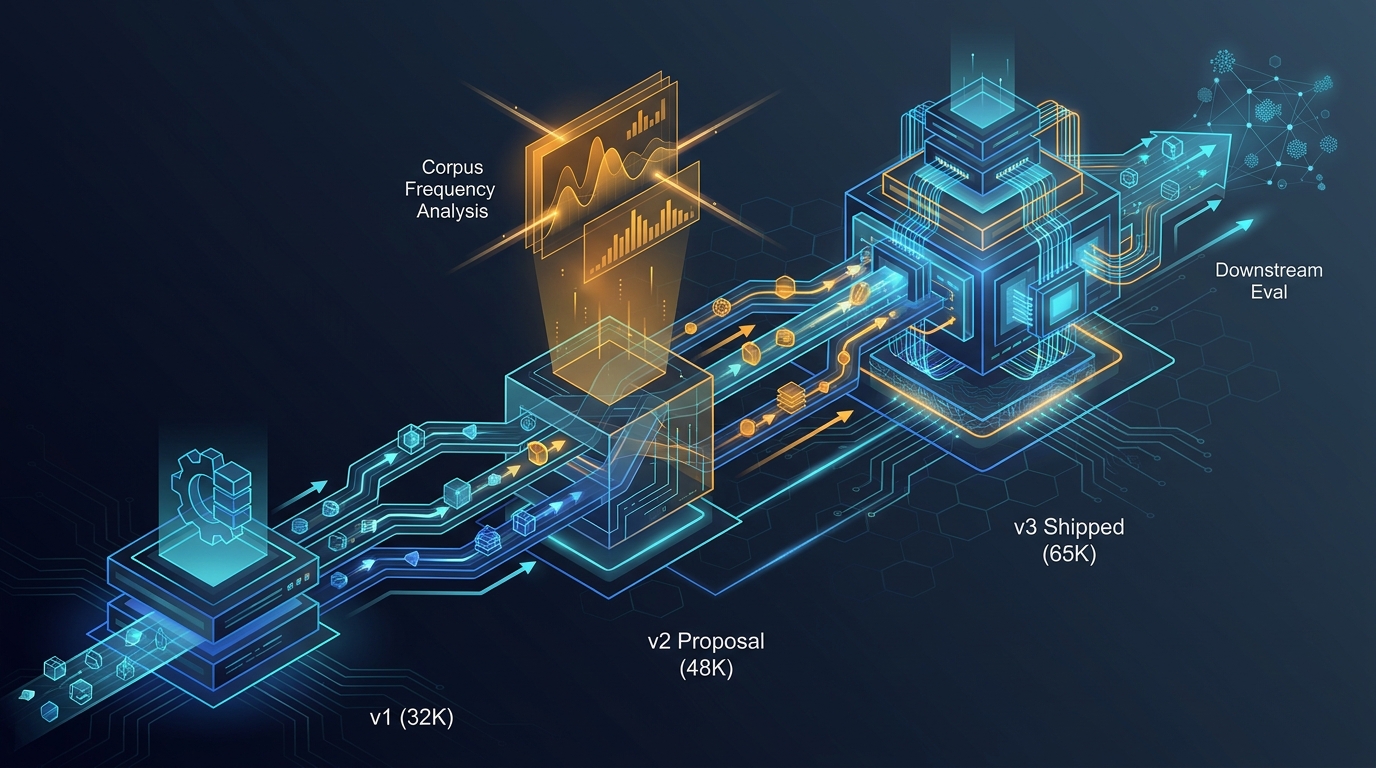

Tokenizer Evolution for C++ Code: From v2 Proposal to v3 Shipped

How the MegaCpp C++ tokenizer evolved from a 32K v1, through a 48K v2 proposal, to the 65K v3 shipped artifact — what we proposed, what corpus frequency analysis told us, and what the tokenizer ended up doing for downstream eval.

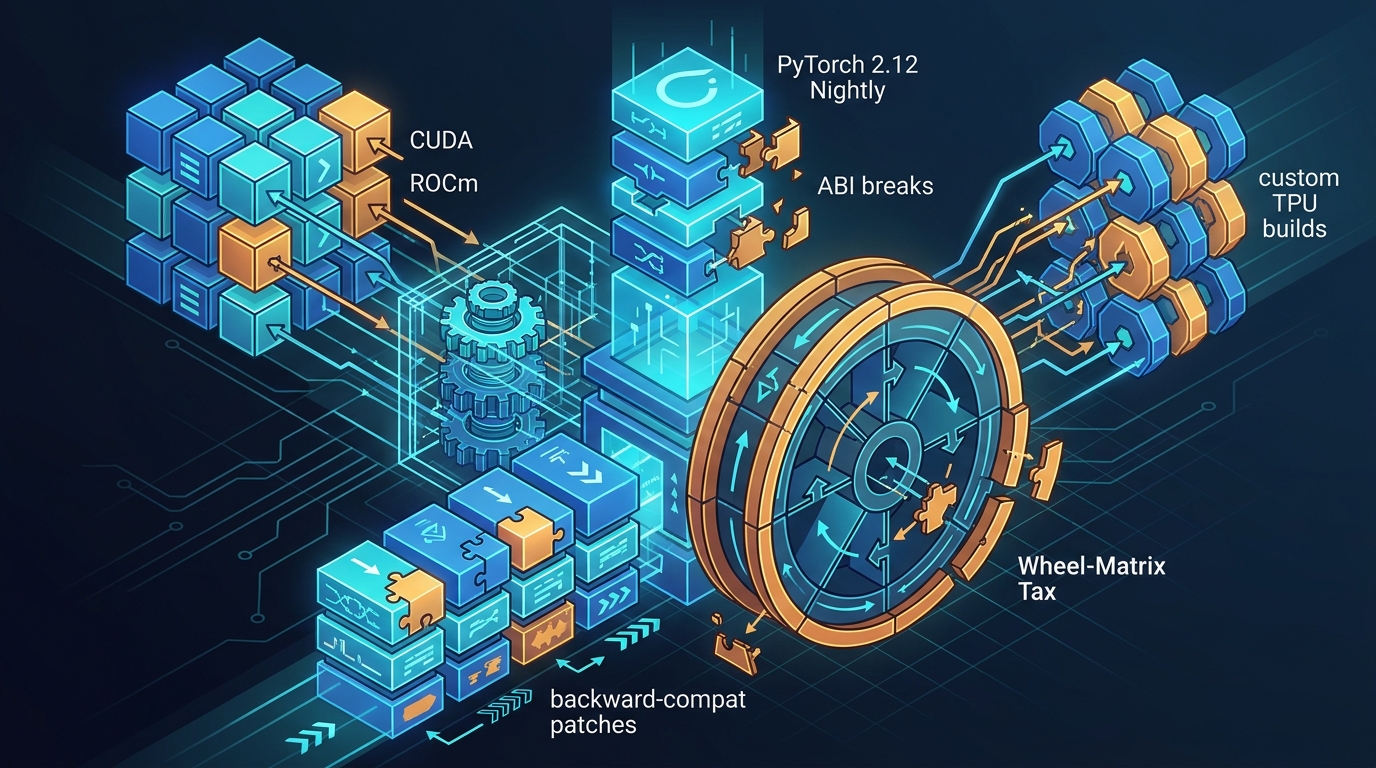

Living on PyTorch 2.12 Nightly: The nanochat ABI and Wheel-Matrix Tax

What it actually cost to sit on PyTorch 2.12 nightlies across CUDA, ROCm and custom TPU builds during the nanochat POC - ABI breaks, API churn, and the backward-compat patches that kept the fleet training.

torch_xla and PJRT on TPU v6e: What Actually Worked

An honest engineering account of running torch_xla / PJRT on Google TPU v6e during the nanochat POC: version pinning, lazy-tensor tracing traps, persistent cache, SPMD, and the pybind we had to ship ourselves.

TPU v6e Performance Deep Dive: Real MFU, Sharding Topology, and the Things That Pretended to Help

Want to learn more about our work?

Check out our product documentation or get in touch to discuss how we can help with your C++ AI needs.